Facebook Knows Your Face Better Than You Do

"DeepFace" can pick you out of a crowd with disturbing accuracy.

Day by day, Facebook's cache of personal data grows ever larger, and the company isn't sitting idly atop its stockpile. This week, Facebook published an update about its powerful facial verification software, DeepFace, which allows company servers to identify individuals in photographs with impressive accuracy.

The software's chuckle-inducing name derives from a type of artificial intelligence (AI) known as "deep learning," an approach that involves feeding an algorithm massive amounts of data so that it learns to identify patterns in images, sounds, and so on.

In Facebook's case, the massive treasure trove of user data consists of voluntarily uploaded photographs, many of them selfies. Facebook explained that after training on 4 million facial images of more than 4,000 people, DeepFace can tell whether two photographs show the same person, regardless of shadows, position of the head, and other complicating factors, with 97.25% accuracy. According to Facebook, this rate of success "[reduces] the error of the current state-of-the-art by more than 25%."
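To put the "more than 25%" figure in perspective, here is a rough back-of-the-envelope calculation in Python. It assumes the quote refers to a relative reduction in error rate (error being 100% minus accuracy). The function name is made up for illustration, and the result is an implied bound, not a number reported in the article.

```python
def implied_baseline_error(new_accuracy: float, relative_reduction: float) -> float:
    """Error rate the previous best system must have had, given the new
    accuracy and the claimed relative reduction in error.

    Assumes: reduction = 1 - new_error / old_error.
    """
    new_error = 1.0 - new_accuracy
    return new_error / (1.0 - relative_reduction)


new_accuracy = 0.9725  # accuracy figure quoted by Facebook
baseline_error = implied_baseline_error(new_accuracy, 0.25)

print(f"DeepFace error rate: {1.0 - new_accuracy:.2%}")
print(f"Implied previous error rate: at least {baseline_error:.2%}")
```

In other words, a 2.75% error rate that is more than a quarter lower than the previous best implies the earlier systems were wrong somewhat more than 3.6% of the time.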

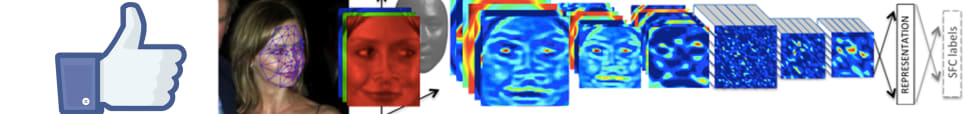

So how does DeepFace work? Let's say a computer is given a photo of a woman facing slightly to the left. DeepFace maps her features in 3D and virtually turns her head to face forward. The software then analyzes that forward-facing countenance, produces a "numeric map" of it, and searches the database for other numeric maps that match. When two maps are sufficiently similar, the software declares a match.
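The matching step can be pictured with a short Python sketch. This is not Facebook's code: it assumes each "numeric map" is simply a list of numbers, uses cosine similarity as a stand-in measure of likeness, and picks an arbitrary threshold of 0.9. The three-number vectors and photo names are invented for illustration; a real system would use far longer vectors produced by the trained network.

```python
import math


def cosine_similarity(a, b):
    """Similarity of two equal-length vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def find_matches(query, database, threshold=0.9):
    """Return (name, score) pairs whose similarity to the query meets
    the threshold, best match first. The threshold is an assumed value."""
    matches = []
    for name, vector in database.items():
        score = cosine_similarity(query, vector)
        if score >= threshold:
            matches.append((name, score))
    return sorted(matches, key=lambda m: m[1], reverse=True)


# Hypothetical numeric maps for three stored photos.
database = {
    "photo_a": [0.90, 0.10, 0.40],
    "photo_b": [0.10, 0.80, 0.20],
    "photo_c": [0.88, 0.15, 0.38],
}

# Numeric map of the new, forward-rotated face.
query = [0.91, 0.12, 0.39]

matches = find_matches(query, database)
for name, score in matches:
    print(f"{name}: similarity {score:.4f}")
```

With these made-up numbers, `photo_a` and `photo_c` clear the threshold while `photo_b` does not. Raising the threshold yields fewer false matches at the cost of missing more genuine ones.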

The most obvious application of this technology is to make automatic photo tagging quicker and more efficient, but it's possible that Facebook could highlight additional use cases when it presents its research at the IEEE Conference on Computer Vision and Pattern Recognition this June.